- Escaping V8 Sandbox via WebAssembly JIT Spraying (V8 < 10.6.24)

- Escaping V8 Sandbox via WebAssembly JIT Spraying: Part 2 (11.0.4 <= V8 < 12.2.170)

In Part 1, I demonstrated how to escape the V8 sandbox by overwriting the call target stored in the WasmInternalFunction object. This technique was eventually mitigated when V8 moved the call target pointer into the External Pointer Table, rendering it immutable from within the sandbox.

In this follow-up post, I introduce an alternative technique that exploits the WebAssembly lazy compilation mechanism. The jump_table_start field of the WasmInstanceObject remained exposed inside the sandbox in the affected versions, so corrupting it lets us hijack control flow and redirect execution to our JIT-sprayed shellcode.

Setup

- Ubuntu 22.04

- V8 commit 8cf17a14a78cc1276eb42e1b4bb699f705675530 (Jan 4, 2024)

Run v8setup.py in your working directory.

Analysis

Calling WebAssembly Function

d8.file.execute("v8/test/mjsunit/wasm/wasm-module-builder.js");

The JavaScript code above creates a WebAssembly module containing three functions, and calls the first one.
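For readers without the mjsunit helper at hand, the same shape of test case can be sketched with a hand-assembled binary instead of wasm-module-builder.js. This is a stand-in, not the listing used in this post: the export names f0, f1, f2 and the returned constants are chosen purely for illustration.

```javascript
// Hand-assembled Wasm binary (illustrative stand-in): three exported
// functions f0, f1, f2 of type () -> i32 that return 1, 2, and 3.
const wasmBytes = new Uint8Array([
  0x00, 0x61, 0x73, 0x6d, 0x01, 0x00, 0x00, 0x00, // magic + version
  0x01, 0x05, 0x01, 0x60, 0x00, 0x01, 0x7f,       // type section: () -> i32
  0x03, 0x04, 0x03, 0x00, 0x00, 0x00,             // function section: 3 functions of type 0
  0x07, 0x10, 0x03,                               // export section: 3 exports
  0x02, 0x66, 0x30, 0x00, 0x00,                   //   "f0" -> function 0
  0x02, 0x66, 0x31, 0x00, 0x01,                   //   "f1" -> function 1
  0x02, 0x66, 0x32, 0x00, 0x02,                   //   "f2" -> function 2
  0x0a, 0x10, 0x03,                               // code section: 3 bodies
  0x04, 0x00, 0x41, 0x01, 0x0b,                   //   i32.const 1; end
  0x04, 0x00, 0x41, 0x02, 0x0b,                   //   i32.const 2; end
  0x04, 0x00, 0x41, 0x03, 0x0b,                   //   i32.const 3; end
]);

const wasmModule = new WebAssembly.Module(wasmBytes);
const wasmInstance = new WebAssembly.Instance(wasmModule);

// Calling the first exported function goes through the JS-to-Wasm call path
// analyzed in the rest of this section.
const firstResult = wasmInstance.exports.f0();
```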

The JavaScript function call is handled by Builtins_CallFunction_ReceiverIsAny(), which is generated by Builtins::Generate_CallFunction_ReceiverIsAny().

void Builtins::Generate_CallFunction_ReceiverIsAny(MacroAssembler* masm) {

// ----------- S t a t e -------------

Builtins::Generate_CallFunction() calls MacroAssembler::InvokeFunctionCode() to generate the function invocation sequence.

switch (type) {

MacroAssembler::InvokeFunctionCode() calls MacroAssembler::JumpJSFunction() if type is InvokeType::kJump.

// When the sandbox is enabled, we can directly fetch the entrypoint pointer

MacroAssembler::JumpJSFunction() calls MacroAssembler::LoadCodeEntrypointViaCodePointer() to emit instructions that fetch the entrypoint pointer.

// This class does not use the generated verifier, so if you change anything

// Code pointer handles are shifted by a different amount than indirect pointer

constexpr int kCodePointerTableEntrySize = 16;

void MacroAssembler::LoadCodeEntrypointViaCodePointer(Register destination,

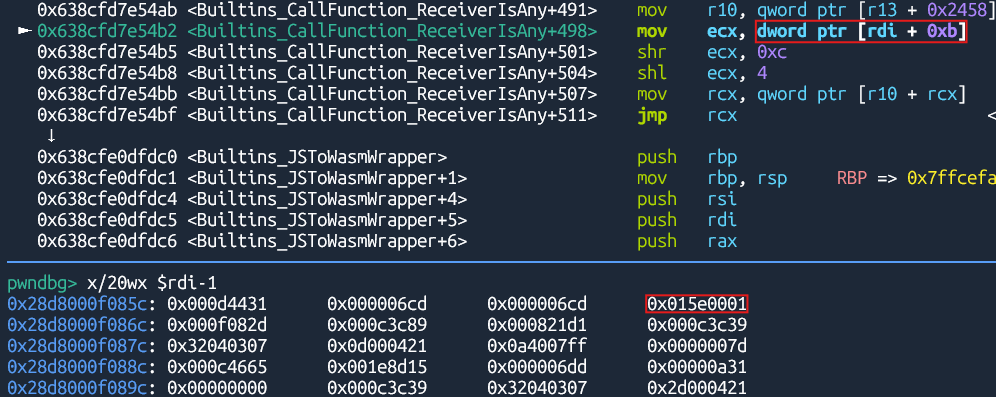

Builtins_CallFunction_ReceiverIsAny() reads the code pointer handle stored in the code field of the Function object and converts the handle into an index, which selects the function's entry in the code pointer table.

std::atomic<Address> entrypoint_;

The CodePointerTableEntry class has two fields: entrypoint_ and code_. The entrypoint_ field holds the entrypoint address, and the code_ field holds the address of the Code object corresponding to the function.
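The lookup described above can be modeled in a few lines. This is a toy model, not V8 code: the table is a flat array of 64-bit words with two words per 16-byte entry, and the value of kCodePointerHandleShift is an assumption made for illustration (only kCodePointerTableEntrySize = 16 appears in the listing above). The addresses stored in the table are made up.

```javascript
// Toy model of the code pointer table lookup.
const kCodePointerTableEntrySize = 16; // bytes per entry, from the source above
const kCodePointerHandleShift = 12;    // ASSUMPTION: illustrative shift value
const kWordSize = 8;                   // each field is one 64-bit word

// Convert a code pointer handle into the byte offset of its table entry.
function handleToEntryOffset(handle) {
  const index = handle >>> kCodePointerHandleShift;
  return index * kCodePointerTableEntrySize;
}

// Read the entrypoint_ field, modeled as the first word of the entry.
function loadEntrypoint(table, handle) {
  return table[handleToEntryOffset(handle) / kWordSize];
}

// Four entries, each [entrypoint_, code_]; the values are hypothetical.
const codePointerTable = new BigUint64Array(8);
codePointerTable[4] = 0x7f0000001000n; // entry 2: entrypoint_
codePointerTable[5] = 0x000100000042n; // entry 2: code_

const exampleHandle = 2 << kCodePointerHandleShift; // handle that encodes index 2
const exampleEntrypoint = loadEntrypoint(codePointerTable, exampleHandle);
```

Because the handle only ever selects a slot in a table that lives outside the sandbox, corrupting the handle inside the sandbox can pick a different valid entry but cannot produce an arbitrary jump target.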

DCHECK_EQ(jump_mode, JumpMode::kJump);

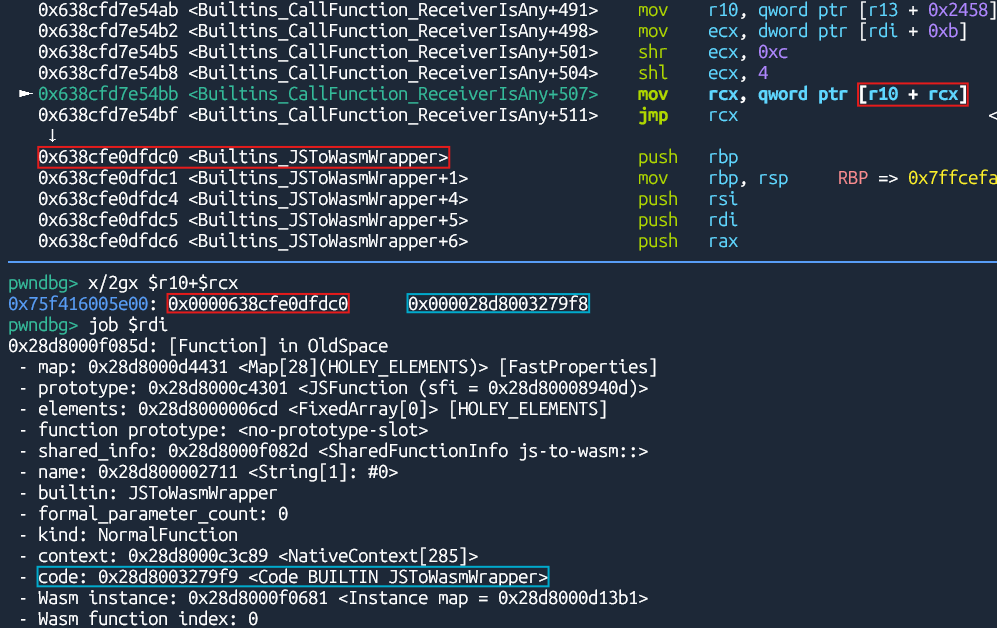

After getting the entrypoint address, Builtins_CallFunction_ReceiverIsAny() jumps to that address, where the generic JS-to-Wasm wrapper starts.

Builtins_JSToWasmWrapper() calls Builtins_JSToWasmWrapperAsm() generated by Builtins::Generate_JSToWasmWrapperAsm().

void Builtins::Generate_JSToWasmWrapperAsm(MacroAssembler* masm) {

Register call_target = rdi;

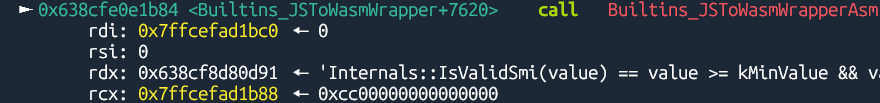

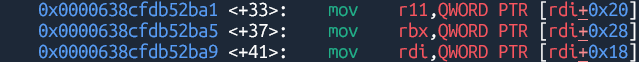

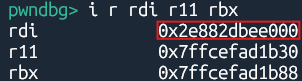

Builtins_JSToWasmWrapperAsm() loads the call target address into rdi, the parameter start address into r11, and the parameter end address into rbx.

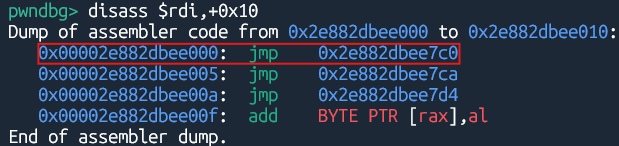

The call target points to the jump table entry corresponding to the invoked WebAssembly function.
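As a rough illustration of how a function maps to its jump table entry, the sketch below computes a slot address from a slot index. The line size and slot size are assumptions for an x64 build and are not taken from the listings in this post; the base address is made up, and slotIndex stands for the function's index among the module's declared (non-imported) functions.

```javascript
// ASSUMPTION: x64 jump table geometry, used here for illustration only.
const kJumpTableLineSize = 64; // bytes per jump table line
const kJumpTableSlotSize = 5;  // bytes per slot (one near jump)
const kJumpTableSlotsPerLine = Math.floor(kJumpTableLineSize / kJumpTableSlotSize);

// Slots never straddle a line, so the offset is computed per line.
function jumpSlotIndexToOffset(slotIndex) {
  const lineIndex = Math.floor(slotIndex / kJumpTableSlotsPerLine);
  const offsetInLine = (slotIndex % kJumpTableSlotsPerLine) * kJumpTableSlotSize;
  return lineIndex * kJumpTableLineSize + offsetInLine;
}

// The call target is the jump table base plus the slot offset.
function callTargetFor(jumpTableStart, slotIndex) {
  return jumpTableStart + BigInt(jumpSlotIndexToOffset(slotIndex));
}

const exampleBase = 0x100000000000n; // hypothetical jump table address
const firstTarget = callTargetFor(exampleBase, 0);
const secondTarget = callTargetFor(exampleBase, 1);
```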


int next_offset = 0;

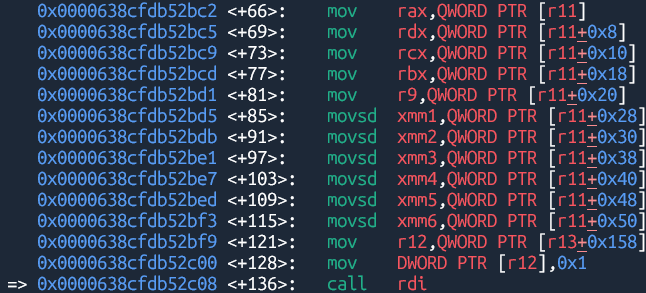

Then, Builtins_JSToWasmWrapperAsm() copies the parameters to the reserved registers, and jumps to the call target address.

WebAssembly Lazy Compilation

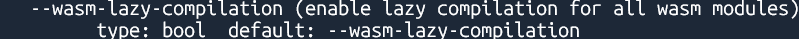

DEFINE_BOOL(wasm_lazy_compilation, true,

With wasm_lazy_compilation enabled, WebAssembly functions are compiled when they are first called, rather than when the module is instantiated.
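The effect of lazy compilation on the jump table can be sketched with a small model. This is a conceptual illustration only: LazyModule and its methods are hypothetical names, and "compiling" is simulated by invoking a factory that returns the real function.

```javascript
// Toy model: every jump table slot starts out pointing at a lazy-compile stub.
// The first call through a slot compiles the function and patches the slot.
class LazyModule {
  constructor(functionFactories) {
    this.compileCount = 0;
    this.jumpTable = functionFactories.map(
      (factory, index) => (...args) => this.compileLazy(index, factory, args)
    );
  }

  // Stand-in for the lazy-compile path: compile, patch the slot, then
  // re-dispatch through the jump table so the compiled code runs.
  compileLazy(index, factory, args) {
    this.compileCount += 1;
    this.jumpTable[index] = factory();
    return this.jumpTable[index](...args);
  }

  call(index, ...args) {
    return this.jumpTable[index](...args);
  }
}

const lazyModule = new LazyModule([
  () => (a, b) => a + b,
  () => (a, b) => a * b,
  () => () => 42,
]);

const compiledBeforeCall = lazyModule.compileCount; // nothing compiled at instantiation
const firstCallResult = lazyModule.call(0, 2, 3);   // triggers compilation of function 0
const secondCallResult = lazyModule.call(0, 4, 5);  // goes straight to compiled code
```

Note that the second dispatch after compilation goes through the jump table again, which is the step the technique in this post targets.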

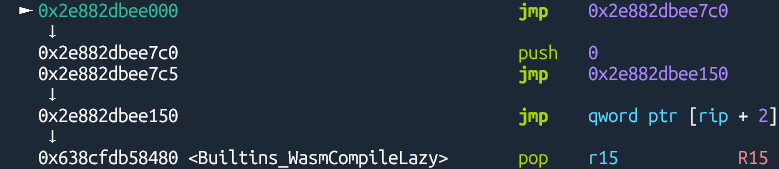

The jump table directs execution to Builtins_WasmCompileLazy() generated by Builtins::Generate_WasmCompileLazy().

// Push arguments for the runtime function.

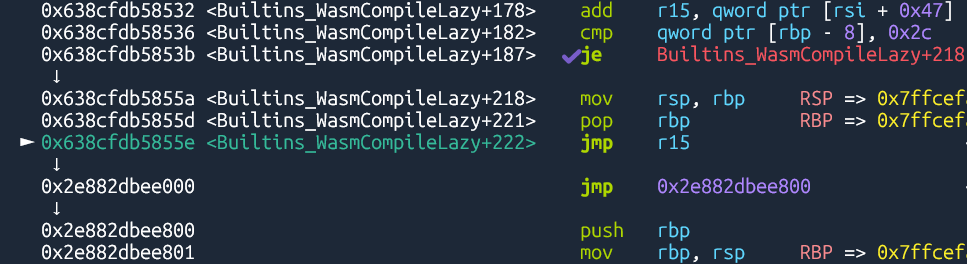

Builtins_WasmCompileLazy() calls Runtime_WasmCompileLazy() to compile the function.

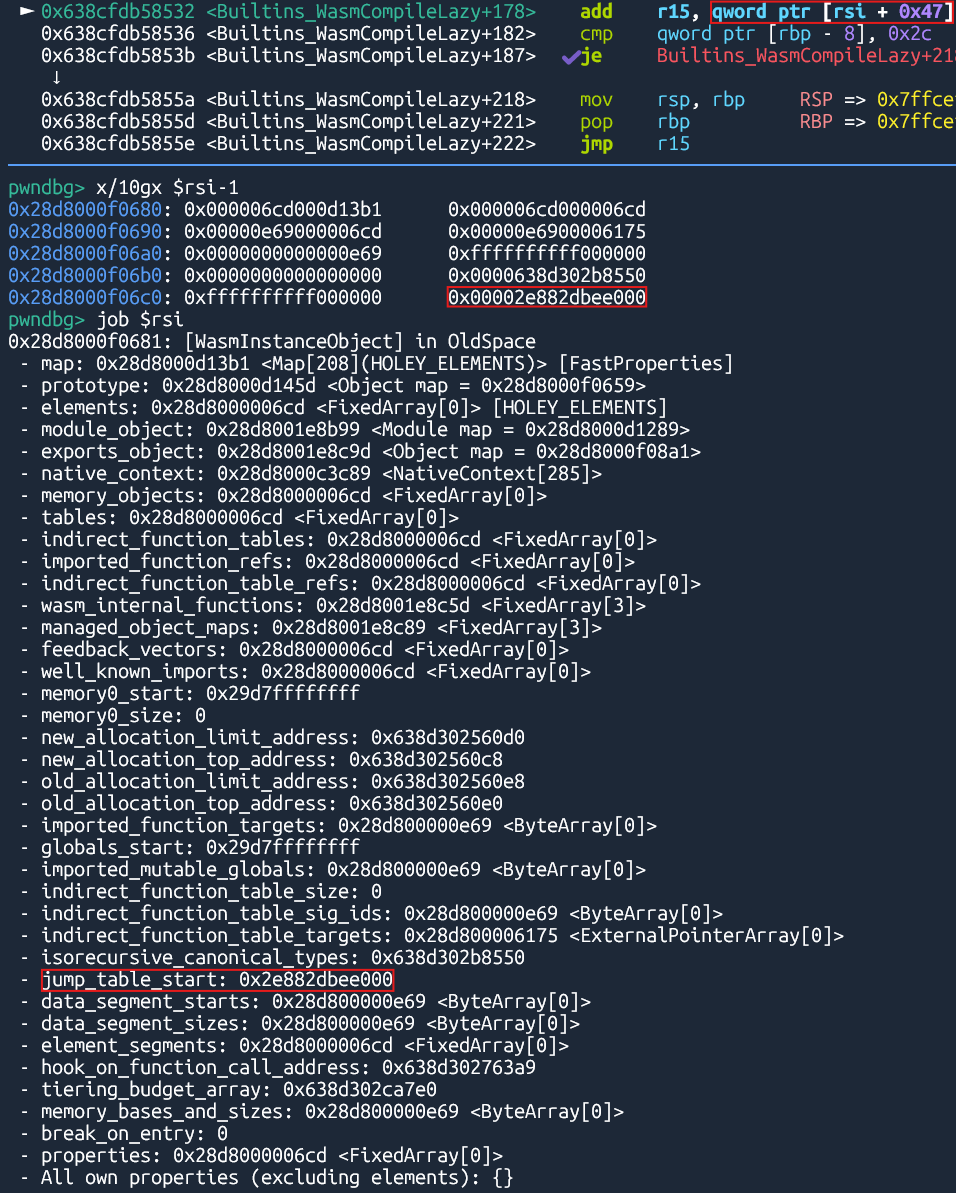

DECL_PRIMITIVE_ACCESSORS(jump_table_start, Address)

// After the instance register has been restored, we can add the jump table

Once compilation finishes, Builtins_WasmCompileLazy() reads jump_table_start from the WasmInstanceObject, adds the function’s jump table offset to obtain its jump table slot address, and jumps to that address. This time, the jump table transfers control to the function’s compiled code.

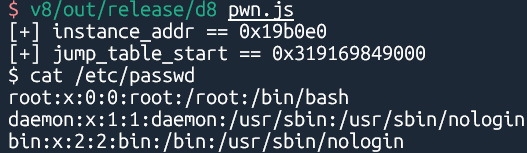

Since the WasmInstanceObject resides within the V8 sandbox, we can hijack control flow by overwriting the jump_table_start field with an arbitrary address before lazy compilation proceeds.
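The control-flow hijack can be illustrated with a harmless simulation. Everything here is hypothetical: the addresses, the slot offset, and the finishLazyCompile helper are invented for the model, and the "address space" is just a map from addresses to JavaScript callbacks.

```javascript
// Toy address space: maps an address to the code that "runs" there.
const executableMemory = new Map();

const realJumpTableStart = 0x1000n; // hypothetical jump table base
const slotOffset = 5n;              // hypothetical offset of the called function's slot
const shellcodeAddress = 0x9000n;   // hypothetical address of JIT-sprayed code

executableMemory.set(realJumpTableStart + slotOffset, () => "compiled wasm function");
executableMemory.set(shellcodeAddress, () => "attacker-controlled code");

// Models the in-sandbox instance object: an attacker with a sandboxed
// read/write primitive can modify this field.
const instanceObject = { jumpTableStart: realJumpTableStart };

// Models the tail of the lazy-compile builtin: add the jump table start
// read from the instance to the slot offset, then jump to the result.
function finishLazyCompile(instance, offset) {
  const target = instance.jumpTableStart + offset;
  const code = executableMemory.get(target);
  if (!code) throw new Error("crash: jump to unmapped address");
  return code();
}

const benignOutcome = finishLazyCompile(instanceObject, slotOffset);

// Corrupt the field so that start + offset lands exactly on the shellcode.
instanceObject.jumpTableStart = shellcodeAddress - slotOffset;
const hijackedOutcome = finishLazyCompile(instanceObject, slotOffset);
```

The builtin trusts the value it reads from the instance object, so the attacker only needs to subtract the known slot offset from the desired target address.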

Exploitation

Bisection

[wasm] Enable lazy compilation by default (Nov 14, 2022)

This technique became possible with the above commit, which enabled lazy compilation by default.

Patch

[wasm] Introduce WasmTrustedInstanceData (Jan 4, 2024)

This CL moves most data from the WasmInstanceObject to a new WasmTrustedInstanceData. As the name suggests, this new object is allocated in the trusted space and can hence hold otherwise-unsafe data (like direct pointers). As the Wasm instance was still storing some unsafe pointers, this CL closes holes in the V8 sandbox, and allows us to land follow-up refactorings to remove more indirections for sandboxing (potentially after moving more data structures to the trusted space).

The general idea is that during execution we mostly work with the WasmTrustedInstanceData object. This is passed as a direct pointer to Wasm functions and is stored in Wasm frames. The WasmInstanceObject is the JS-exposed wrapper, which also holds user-defined properties and elements.

The above commit moves some sensitive data including jump_table_start to the newly introduced WasmTrustedInstanceData, which resides outside the V8 sandbox. This prevents overwriting jump_table_start to hijack control flow.